For any product, the best insights don’t come from dashboards, but from the voices of customers themselves.

In the competitive digital finance sector, the leading players are proactively mining channels for unfiltered customer feedback to drive innovative product solutions at scale.

Those customer voices, however, tend to be scattered.

That's why we built this Social Listening System for a Latin American bank to better understand their customers.

It will help you, too.

Every day, thousands of users share their experiences on platforms like Instagram, X (Twitter), and App Store reviews. These messages reflect everything from bugs and usability frustrations to feature requests and delight.

Yet, the feedback about the bank was fragmented, multilingual, informal, and hard to track.

What makes the challenge even more pronounced?

Traditional social listening tools could surface sentiment, but not technical understanding.

Our client needed something deeper: a system that could actually quantify user feedback and map it to their products.

We designed a custom AI solution we call a LightRAG-based Social Listening System: a framework for transforming fragmented, noisy user feedback into structured, explainable insights.

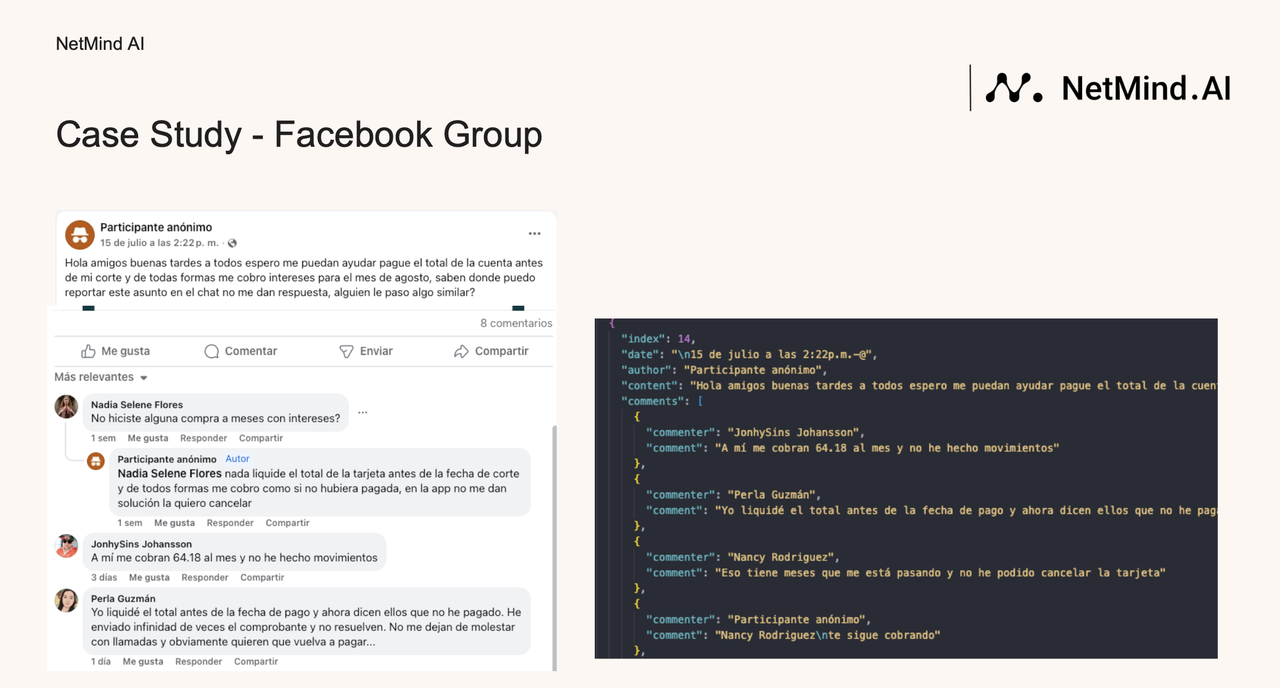

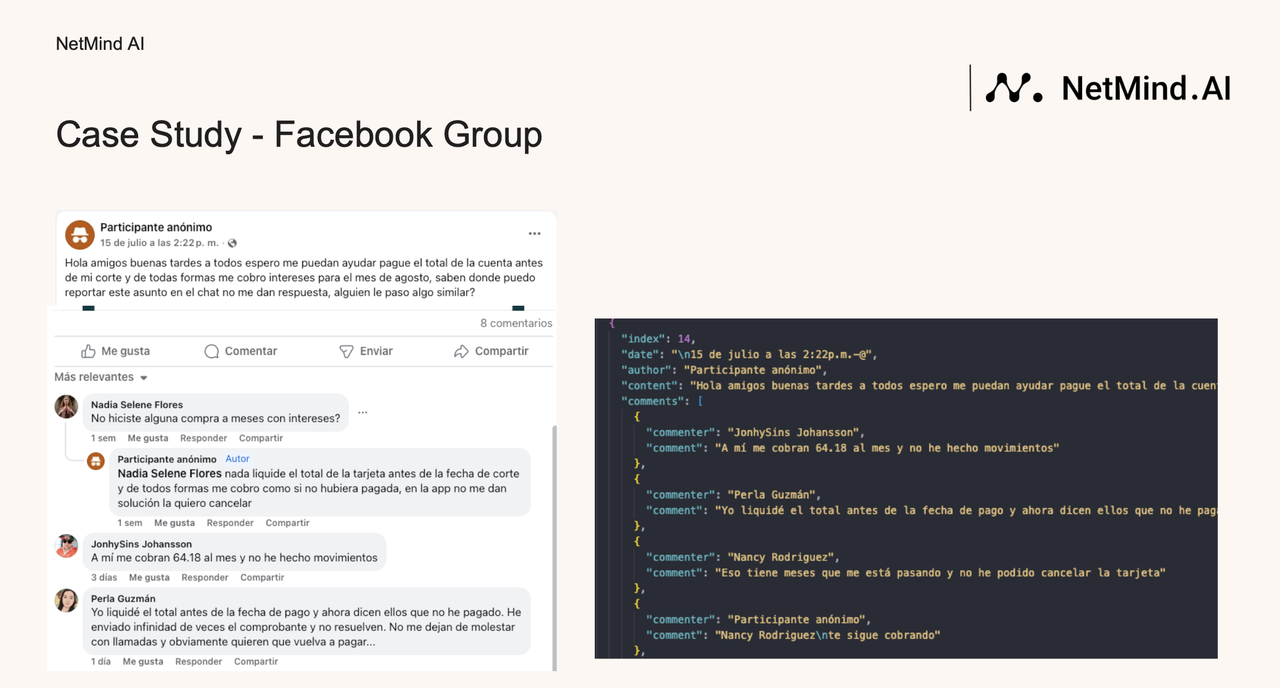

Data acquisition and normalization: Our engineering team built multi-platform pipelines that captured feedback text with contextual signals, which included author names, timestamps, app versions, developer responses, and even screenshots of the feedback posts to preserve metadata fidelity.

Once the data was collected, we used LLMs to automatically annotate each entry.

Every post or review was categorized by its intent, such as bug report, feature request, or positive feedback. This automated labeling transformed raw chatter into a searchable, analyzable corpus.

But that was not enough.

To further enhance data quality, we applied fuzzy matching to merge duplicated content across platforms.

This de-duplication ensured that similar feedback (e.g., multiple users reporting the same login issue in slightly different words) was aggregated into a single, representative record.
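As a rough illustration of the idea, here is a minimal sketch of fuzzy de-duplication. It assumes character-level similarity from Python's standard-library `difflib` as a stand-in, since the exact matching method used in the project is not specified here. The function names, the sample posts, and the `0.8` threshold are all hypothetical.

```python
from difflib import SequenceMatcher


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not matter."""
    return " ".join(text.lower().split())


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two normalized strings."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def deduplicate(posts: list[str], threshold: float = 0.8) -> list[list[str]]:
    """Greedily group posts that are similar to the first member of a group.

    The threshold is an assumed value; a real pipeline would tune it on data.
    """
    groups: list[list[str]] = []
    for post in posts:
        for group in groups:
            if similarity(post, group[0]) >= threshold:
                group.append(post)
                break
        else:
            # No existing group was close enough, so start a new one.
            groups.append([post])
    return groups


# Invented sample posts, for illustration only.
posts = [
    "I can't log in to the app after the update",
    "I cant log in to the app after the update!",
    "Please add dark mode",
]
groups = deduplicate(posts)
representatives = [group[0] for group in groups]
```

Each group's first post acts as the representative record here. Character-level matching only catches near-identical wording; feedback that is paraphrased more heavily would likely need a semantic comparison instead.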

On top of this, we leveraged LLM-based profiling to generate concise entity cards for key elements such as features, user types, or recurring issues, each including a name, type, and short summary.
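To make the shape of an entity card concrete, the sketch below models one as a small record with the three fields mentioned above and validates a JSON string of the kind an LLM might return. The `EntityCard` class, the `parse_entity_card` helper, and the list of allowed types are illustrative assumptions, not the project's actual schema.

```python
import json
from dataclasses import dataclass

# Assumed taxonomy, based on the examples given in the text.
ALLOWED_TYPES = {"feature", "user_type", "issue"}


@dataclass(frozen=True)
class EntityCard:
    name: str
    type: str
    summary: str


def parse_entity_card(raw_json: str) -> EntityCard:
    """Parse and validate one entity card from a JSON string."""
    data = json.loads(raw_json)
    card = EntityCard(
        name=data["name"].strip(),
        type=data["type"].strip().lower(),
        summary=data["summary"].strip(),
    )
    if card.type not in ALLOWED_TYPES:
        raise ValueError(f"unexpected entity type: {card.type!r}")
    return card


# Hypothetical model output, for illustration only.
raw = '{"name": " Login ", "type": "Feature", "summary": "Users sign in to the app."}'
card = parse_entity_card(raw)
```

Validating the model output before it enters the corpus keeps malformed or off-taxonomy cards out of the later graph layer.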

What happened next?

These entity profiles became the building blocks for the next layer of our system: graph-based retrieval and insight generation.

Graph-based indexing through LightRAG: this mapping is where the value of our system is truly unlocked. Instead of treating each comment as an isolated text block, our team adopted LightRAG to map the relationships among users, reviews, app versions, and developer replies along temporal and thematic dimensions.

The dual-path retrieval of LightRAG is applied on both detailed facts and higher-level concepts.

On top of this graph, we built a RAG-powered assistant capable of answering natural-language questions, always with verifiable citations. Each insight the assistant provided linked directly back to the original screenshot, ensuring transparency and guarding against hallucinations.

LightRAG is an advanced version of Retrieval-Augmented Generation (RAG) that helps large language models find and use information more intelligently. Unlike traditional RAG systems that treat data as flat text, LightRAG uses graph structures to represent knowledge as networks of connected concepts, allowing the system to understand relationships between pieces of information. It features a dual-level retrieval process that searches both detailed facts and higher-level concepts, improving the depth and relevance of responses. Moreover, LightRAG includes an incremental update mechanism that keeps the system up to date as new information is added.
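The dual-level idea can be illustrated with a deliberately simplified toy. The sketch below is not the LightRAG API: it assumes two hand-built keyword indexes, one for specific entities (low level) and one for broad themes (high level), and simply merges the hits from both. All index contents and record ids are invented.

```python
import re

# Low-level index: specific entities mapped to feedback record ids (invented).
ENTITY_INDEX = {
    "login": ["r1", "r2"],
    "transfer": ["r3"],
}

# High-level index: broad themes mapped to feedback record ids (invented).
THEME_INDEX = {
    "security": ["r1", "r4"],
    "performance": ["r5"],
}


def tokenize(text: str) -> set[str]:
    """Split text into lowercase alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def lookup(index: dict[str, list[str]], words: set[str]) -> list[str]:
    """Collect record ids for every index key that appears in the query."""
    return sorted({rid for key, ids in index.items() if key in words for rid in ids})


def dual_level_retrieve(query: str) -> dict[str, list[str]]:
    """Query both levels and merge the results without duplicates."""
    words = tokenize(query)
    low = lookup(ENTITY_INDEX, words)
    high = lookup(THEME_INDEX, words)
    return {"low_level": low, "high_level": high, "combined": sorted(set(low) | set(high))}


result = dual_level_retrieve("Are login problems hurting security perception?")
```

In this toy, the entity path surfaces records tied to the specific word "login", while the theme path adds records about "security" in general, which is the kind of complementary coverage the dual-level design aims for.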

In practice, what emerged was a feedback system that truly thinks like a product manager.

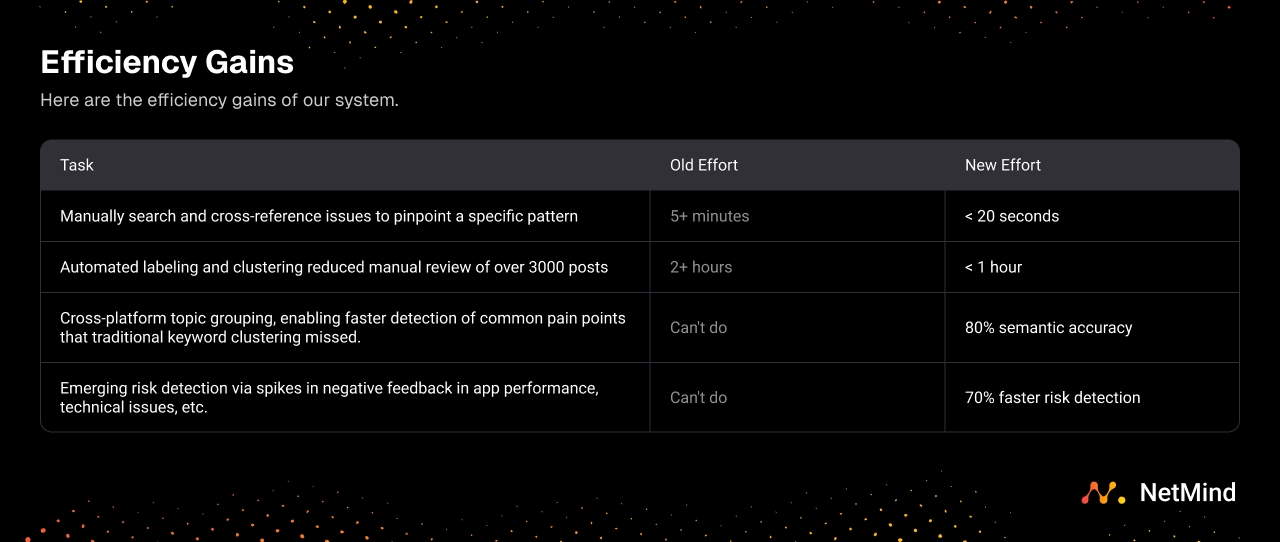

Here are the efficiency gains of our system.

| Task | Old Effort | New Effort |
| --- | --- | --- |
| Manually search and cross-reference issues to pinpoint a specific pattern | 5+ minutes | < 20 seconds |
| Automated labeling and clustering reduced manual review of over 3000 posts | 2+ hours | < 1 hour |
| Cross-platform topic grouping, enabling faster detection of common pain points that traditional keyword clustering missed | Can't do | 80% semantic accuracy |
| Emerging risk detection via spikes in negative feedback in app performance, technical issues, etc. | Can't do | 70% faster risk detection |
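For readers curious how spike-based risk detection might work, here is a minimal sketch. It assumes daily counts of negative posts and flags a day when its count sits far above the trailing-window average, using a z-score. The detection method actually used in the project is not described here, so the function, the window size, the threshold, and the sample counts are all hypothetical.

```python
from statistics import mean, stdev


def detect_spikes(
    daily_negative_counts: list[int],
    window: int = 7,
    z_threshold: float = 3.0,
    min_sigma: float = 1.0,
) -> list[int]:
    """Return indices of days whose count is a z-score outlier.

    Each day is compared with the `window` days immediately before it.
    `min_sigma` floors the standard deviation so a flat history does not
    cause a division by zero.
    """
    spikes: list[int] = []
    for i in range(window, len(daily_negative_counts)):
        history = daily_negative_counts[i - window:i]
        mu = mean(history)
        sigma = max(stdev(history), min_sigma)
        if (daily_negative_counts[i] - mu) / sigma >= z_threshold:
            spikes.append(i)
    return spikes


# Invented daily counts of negative posts, for illustration only.
counts = [10, 12, 11, 9, 10, 13, 11, 12, 40, 11]
spike_days = detect_spikes(counts)
```

With these sample counts, only the day with 40 negative posts stands out from its preceding week, so it is the one day flagged for follow-up.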

Each insight from our system is auditable.

Every claim can be traced back to the original comment, post, or app store review, with attached screenshots.

What's even better?

This made collaboration between product, customer support, and compliance far smoother, realizing a full evidence-based decision loop.

Because the client operates in a regulated financial environment, we built the entire pipeline to run in private deployments. All records stayed within the client’s infrastructure to ensure data sovereignty.

In short, it’s powerful AI built responsibly, with governance and security at its core.

This social listening system is a landmark in the journey toward proactive customer understanding.

It provides the crucial "why" that informs operational actions, such as what to do in collections, and paints a complete picture of how product experience shapes user decisions.

For you & me, these interconnected capabilities mark the next frontier: building end-to-end solutions where a shared, explainable data layer turns individual insights into a unified strategic advantage.

Book a demo with our SVP Stacie Chan to turn our AI technology into your AI solutions!
